New tech doesn’t only bring new opportunities; it often brings plenty of potential pitfalls too. When it comes to machine learning, privacy is one of those challenges that quickly come into play. At In The Pocket, thinking about the privacy of our users while applying machine learning is not an afterthought. We recently considered the value of analyzing a video stream that records human activity in our offices. We asked ourselves: how can we safely work with such sensitive data without infringing on people’s privacy?

Computer vision has been around for quite some time, but it has gotten a real boost lately from developments in machine learning. It has many interesting applications, from smart speed cameras to monitoring safety on factory floors. Edge computing makes it possible to run heavy applications and AI models on devices without depending on the cloud for computing power. The collected data no longer has to leave the device, which mitigates privacy risks.

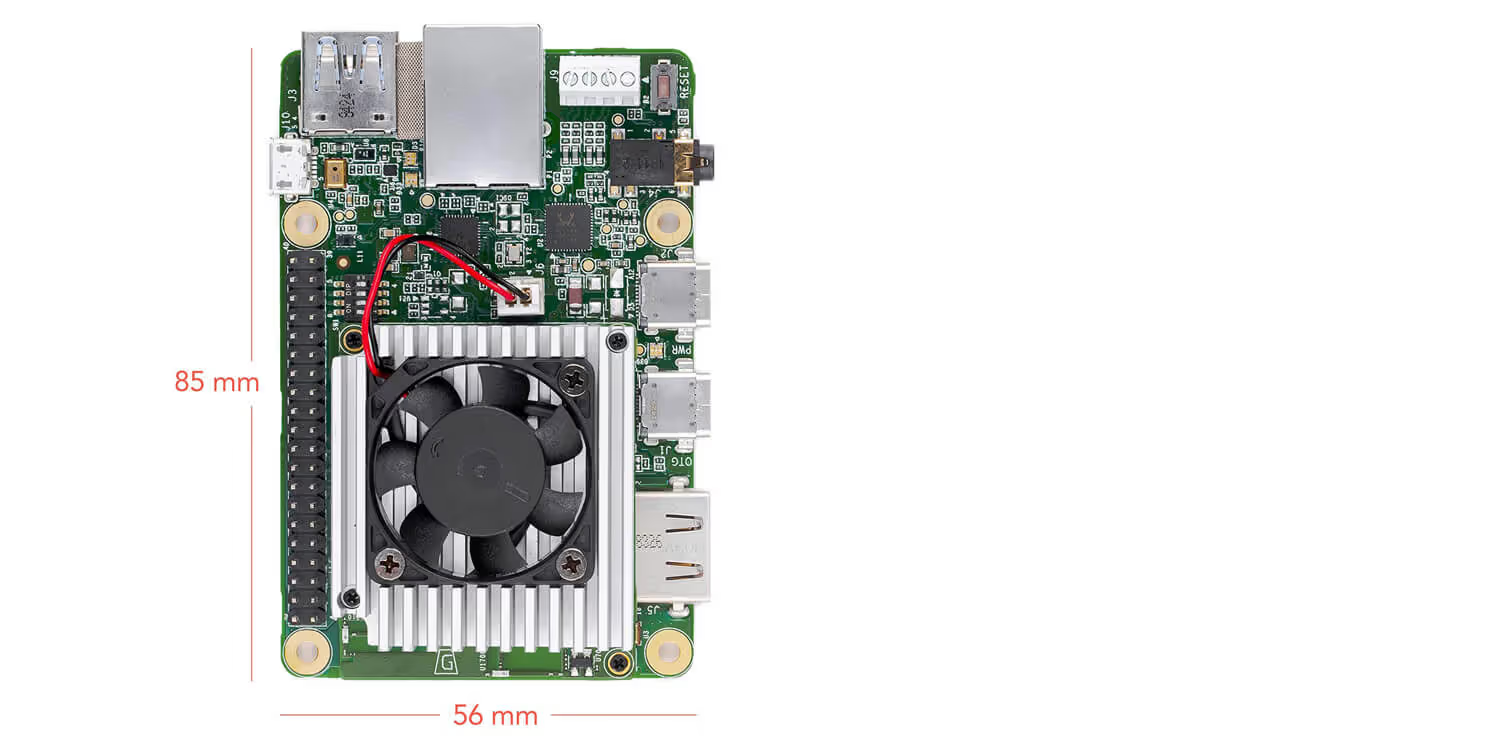

Coral Dev Board

An example of edge computing hardware is Google’s Coral Dev Board, which carries a Google Edge TPU (Tensor Processing Unit). It is a development board that retails for $149.99 and allows you to quickly prototype on-device ML (machine learning) applications. Although the board is affordably priced, its Edge TPU chip packs some serious computing power. With an onboard microSD slot, Gigabit Ethernet, audio and video output, GPIO pins, and both Wi-Fi and Bluetooth, it offers enough possibilities for even the most ambitious prototypes. You can compare it to a Raspberry Pi, but with ML superpowers.

Privacy in the Information Age

Since GDPR (General Data Protection Regulation) came into effect in Europe, privacy has been a hot topic. Collecting data in a sustainable fashion continues to be a challenge for companies. When we were scoping ways to collect data that respect privacy and anonymity, we quickly came up with the idea of analyzing data from a camera feed. Cameras are readily available in various places and allow us to get insights with minimal effort and cost. To be privacy compliant, the camera feed must not be stored or leave the device while data is collected, so that sensitive image data can’t be compromised.

Collecting visual data with Edge TPU

As a test case, we wanted to understand the activity of the kitchen and lunch area of our offices. Have a look at our office space:

As Coral Dev Boards are capable of running demanding machine learning models, we used an off-the-shelf, pre-trained object detection model called SSD MobileNet. This model was trained on 90 object categories from the COCO dataset, including one for people. We connected a USB webcam to the dev board and deployed a Python script on it that pushes the number of people detected to Google Stackdriver every second. Stackdriver exports these logs to Google BigQuery, so we can query them and analyze the data in Python. As a Google Cloud Partner, we love how quickly we can build prototypes and products using the Google Cloud Platform.

As already stated, privacy is a big gain from this approach. The camera feed is never displayed, never leaves the device, and is never stored anywhere. The logs it creates are also completely anonymous. We set up the experiment in our offices, pointed the camera at the area we wanted to understand, and let it collect data for a couple of weeks to see if we could extract some interesting insights.
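To make the privacy argument concrete, here is a minimal sketch of how such a counting loop could be structured. It is not the script we deployed: the `detect` and `emit` callables are hypothetical stand-ins for the Edge TPU inference call and the Stackdriver logging client, and the person class ID and score threshold are assumptions that depend on the label map shipped with the model. The point it illustrates is that only a timestamp and an integer ever leave the loop.

```python
import json
import time
from datetime import datetime, timezone

PERSON_CLASS_ID = 0    # assumption: "person" is label 0 in the model's label map
SCORE_THRESHOLD = 0.5  # assumption: minimum confidence to count a detection


def count_people(detections, threshold=SCORE_THRESHOLD):
    """Count (class_id, score) pairs that are confident person detections."""
    return sum(
        1
        for class_id, score in detections
        if class_id == PERSON_CLASS_ID and score >= threshold
    )


def make_log_entry(count, now=None):
    """Build the anonymous log line: a timestamp and a number, no image data."""
    now = now or datetime.now(timezone.utc)
    return json.dumps({"timestamp": now.isoformat(), "people_count": count})


def run(detect, emit, interval_s=1.0, iterations=None):
    """Call detect() once per interval and ship only the resulting count.

    detect: hypothetical callable returning a list of (class_id, score) pairs
            for the current camera frame; the frame itself stays inside it.
    emit:   hypothetical callable that forwards one log line to the log sink.
    """
    done = 0
    while iterations is None or done < iterations:
        emit(make_log_entry(count_people(detect())))
        done += 1
        time.sleep(interval_s)
```

Calling `run(detect, print)` with a real detector would print one JSON line per second. Swapping `print` for a cloud logging call changes where the counts go, not what is sent.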

So what did we find out about our office?

Our “people detector” ran for about 3 weeks. Even in this relatively short period, we managed to get some interesting insights from the collected data:

First of all, when we plot the complete dataset on a bar chart, we see that the peaks in the number of detected people increase towards the end of the dataset. A possible explanation is the weather: it was very nice during the first 10 days of data capturing, and our colleagues like to work and have lunch outside on the terrace. During the last 2 weeks of data capturing, the weather was cold and rainy, and we were “forced” to eat our lunches inside in the lunch/kitchen area. We can also clearly see holidays represented on the graph, as well as some construction work that takes place on Saturdays due to the renovation of our offices.

When we plot the average number of people during the day for each day of the week, we can see some other patterns emerge:

We can see a big spike during lunchtime. This is no surprise, since that’s when there is the most activity in the kitchen area. On Fridays, we tend to stick around for some after-work drinks starting at 17h, and the data clearly shows that.
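The per-weekday averages described above can be computed with very little code once the logs are available as timestamped counts. The sketch below uses only the standard library and assumes the records arrive as `(datetime, people_count)` pairs; that record shape and the function name are illustrative choices, not the format of our actual BigQuery export.

```python
from collections import defaultdict
from datetime import datetime


def average_by_weekday_hour(records):
    """Average people count per (weekday, hour) bucket.

    records: iterable of (datetime, people_count) pairs (assumed shape).
    Returns a dict keyed by (weekday, hour), where weekday 0 is Monday.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [sum of counts, sample count]
    for timestamp, count in records:
        key = (timestamp.weekday(), timestamp.hour)
        totals[key][0] += count
        totals[key][1] += 1
    return {key: total / n for key, (total, n) in totals.items()}


# Made-up sample values, only to show the call; not measurements from our office.
sample = [
    (datetime(2019, 6, 7, 17, 0, 0), 4),
    (datetime(2019, 6, 7, 17, 0, 1), 6),
    (datetime(2019, 6, 3, 12, 0, 0), 10),
]
averages = average_by_weekday_hour(sample)
```

Plotting one line per weekday from this dictionary gives the kind of chart in which a lunchtime spike or a Friday evening bump becomes visible.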

What's next?

After taking a closer look at the collected data, we can imagine training models on it for predictive purposes. For instance, we could train a model on this data that predicts the number of people present at a certain moment in the future. Another option is to check the scheduled occupancy of meeting rooms against their real-time occupancy and auto-cancel meetings where needed, or to analyze whether the right meeting room size was booked for the number of people present. When we translate a use case like this to smart cities, we could track traffic in certain areas by monitoring pedestrians, cyclists and motorists. This data could then be used for analytics, but also to predict heavy traffic or the flow of people when events are hosted in the city.
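As a rough illustration of the meeting room idea, the sketch below compares a booking with the people counts observed since the meeting started. Everything in it is hypothetical: the `Booking` type, the five-minute grace period and the "half the capacity" rule for an oversized room are assumptions chosen for the example, not something we have built or validated.

```python
from dataclasses import dataclass


@dataclass
class Booking:
    room: str      # hypothetical room name
    capacity: int  # number of seats in the booked room


def review_booking(booking, observed_counts, grace_samples=300):
    """Suggest an action from per-second people counts since the meeting start.

    grace_samples: how many empty samples to tolerate before suggesting a
    cancellation (300 one-second samples is about five minutes; an assumption).
    """
    peak = max(observed_counts, default=0)
    if peak == 0:
        # Nobody seen so far: only cancel once the grace period has elapsed.
        return "cancel" if len(observed_counts) >= grace_samples else "wait"
    if peak > booking.capacity:
        return "too_small"
    if peak * 2 <= booking.capacity:
        # Assumed rule: a room at most half full at its peak was oversized.
        return "oversized"
    return "ok"


booking = Booking(room="Kitchen", capacity=8)
suggestion = review_booking(booking, [0, 3, 4])
```

Because the function only needs counts, it fits the same privacy model as the rest of the experiment: no images and no identities are involved in the decision.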

We’ve only scratched the surface of possibilities with Edge Computing and sustainable computer vision. Our anonymous people detector helps us better understand our work environment and enables us to manage it more effectively. This demonstrates the potential of running computer vision on edge devices. Its applications are beginning to unfold in every industry that leverages computer vision under privacy constraints.
