Imagine buying these smart glasses everyone has been talking about. At first you were hesitant, but today, you decided it was time to finally try them out yourself. You go to the supermarket and start filling your shopping cart with all sorts of things to get through the remainder of the day. While doing so, tiny cameras inside the glasses are registering your surroundings and see exactly which things you are buying. At the exit, you take the “special glasses” lane, just like you did when you walked in. There is no scanning process, no queues and you basically just walk out feeling like a cat burglar. While walking out, the glasses already sent the content of the shopping cart to the supermarket’s server and paid the receipt immediately with the credit card you linked to the glasses. After putting everything in your car, you hop in and head home. While driving the glasses ping you that you are running low on fuel. You stop by a gas station and after you refueled, your glasses, again, take care of the paying process.

This is one of the results that could make our (daily) life easier if computers were able to see and interpret things as we humans can. Actually, they could be even more performant than us because they could consistently foresee certain problems; you wouldn’t be the first person whose car breaks down because you didn’t notice you were running out of gas or put the wrong type of fuel in your car.

But computers cannot (yet?) see, interpret and reason as humans do. Computer vision aims to close this skill gap in one of our five senses: sight. Recent advances show that we are closer than ever. They involve huge “black-box” neural networks meaning that their exact internal workings are abstract and not well understood. Fortunately, tools (LIME, SHAP, tf-explain, etc.) exist to fathom such a network and get a grasp on why and how it has learned certain internal representations of images. These tools permit data scientists and engineers to better understand their precious models and check their performances.

'If the lavishly used metaphor “data is the new oil” is more or less true, then labeled data must be the new diamond.'

Labeled data is much rarer and getting it is time-expensive and costly, but necessary for supervised learning tasks. Asian human labeling farms spawn like hornets round a hornets’ nest and are not the only competitors on the market. Big players such as Google and Amazon offer human labeling too, sometimes involving quite a number ethical issues. For Google’s human labeling service you pay a minimum of 98$ to label (35$) and add bounding boxes (63$) per 1000 images at the time of writing. Doing this for 30 classes consisting of 1000 images each will cost you about 3000$. In the current flow of machine learning, having a hand on labeled data is imperative. As the father of convolutional neural networks, Yann LeCun, stated at NIPS 2016; […] A key element we are missing [to get to truly intelligent machines] is predictive (or unsupervised) learning: the ability of a machine to model the environment, predict possible futures and understand how the world works by observing it and acting on it [without having prior information like with labeled data sets — supervised learning]. In certain branches of computer vision, especially object detection, open source projects have made the supervised way of learning a commodity, but building such a project with your own raw (unlabeled) data is very much less so.

Why

Wouldn’t it be cool if we could use interpretability tools to not only interpret our black-box models, but also to tighten the gap between supervised and unsupervised learning? At In The Pocket, we came up with a idea to use the interpretability tool LIME as an auto-labeling tool in multi-class object detection frameworks. How our pipeline operates is explained below. Auto-labeling replaces the act of humans labeling images (hereby reducing costs), is faster, and also incorporates a sense of standardisation, because no two humans would annotate pictures of cats exactly the same way as opposed to computers. Also, looking at the future, computation power will increase and get cheaper while the speed of human labeling will probably not.

What we did

To prove our case involving 1000 images for 30 classes, we’ve filmed 3 types of fruit (banana, kiwi, and apple) for about 3 minutes each, in different lighting circumstances and angles. We recorded at 24 fps, and with a resolution of 3740 by 2160. We deliberately chose not to include limes in the data set as we suspect LIME might be biased to detecting limes.

The first part in our pipeline consisted of transfer learning with MobileNetV2 of which the first 33 layers were kept and frozen. Two fully connected layers were placed on top. For training, validation, and test data we used every fifth frame of the recorded videos and downsampled them to 224 by 224 images. Following the rules of the book, we ended up with a fully trained image classification model.

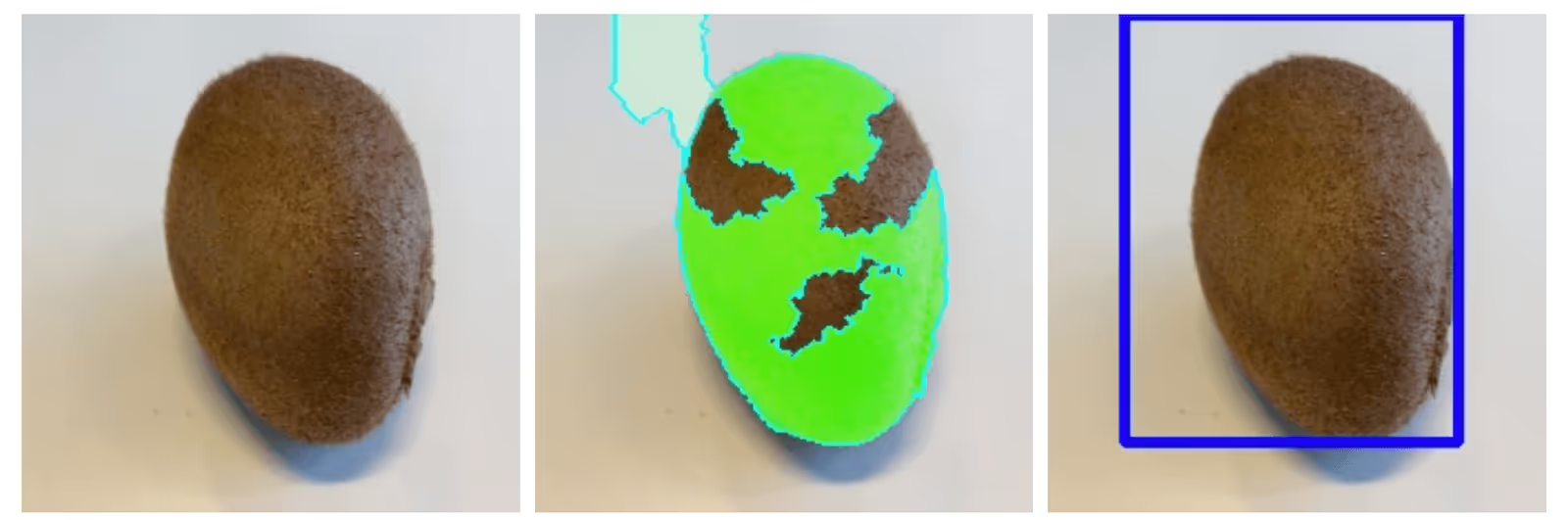

All images (about 2600) were then analysed with LIME, using default parameters, to interpret the model. Using LIME’s positively activated superpixels (regions of the image which makes the classifier believe that the picture belongs to a certain class), we were able to put bounding boxes around the class of interest. Resulting visualisations to clarify the process are shown in the figures below.

The right pictures are the original resized picture, the ones in the middle the Lime positive activation and the ones on the right show the bounding box.

Running LIME and constructing the bounding boxes for 2600 pictures took 4 hours and a half on Google Cloud’s Nvidia Tesla K80. We ended up with bounding box coordinates, and knowing that our trained model correctly classifies the pictures as being apples and kiwis, based on the right features (the features that make up the apple and the kiwi). We then converted the (auto-labeled) bounding boxes to the original dimensions.

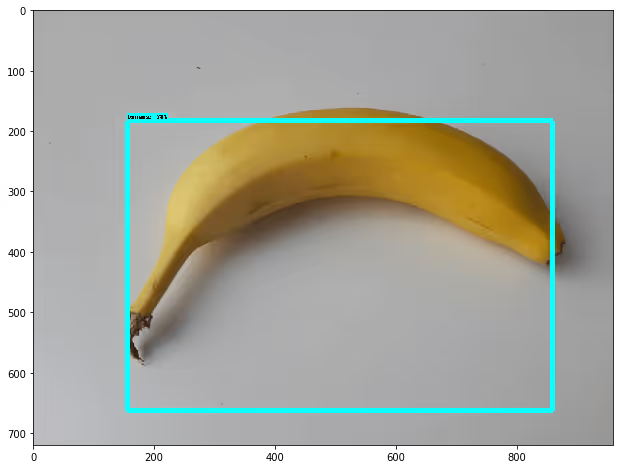

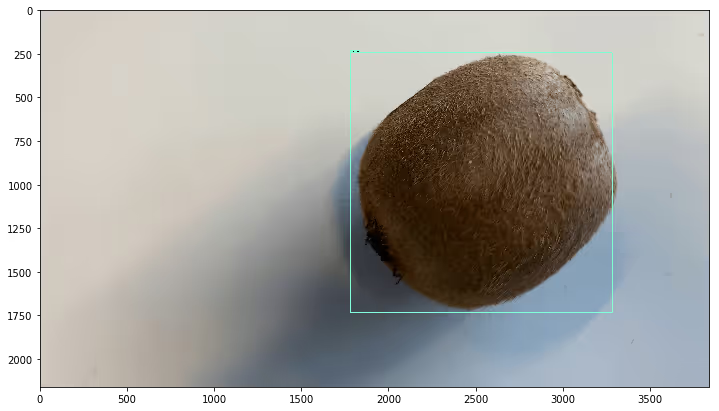

Because the object detection framework we used is running on TensorFlow, we had to change the format of the original images and corresponding bounding boxes to TensorFlow’s proper format called TFrecords. Now we were ready to train the object detection model on TPU’s on Google Cloud. Here, we used MobileNetV1 SSD and the tensorflow object detection API. The benefit of training an object detection model at this stage is that we put the knowledge of the bounding boxes in a model that can do inference way faster than LIME itself (generating bounding boxes via lime takes about 2 minutes on our local machine, while generating bounding boxes via an object detection model takes about 2 seconds). Also, the object detection model generalized pretty well and managed to get the bounding boxes even better than before in some cases. On the figure below, copied from tensorboard, an example is shown for a validation instance (kiwi in this case). You might have to quench your eyes a little to see the bounding boxes.

Results of inference on the trained model when we feed it a novel image of a one-class picture works fine, as shown in the two figures below.

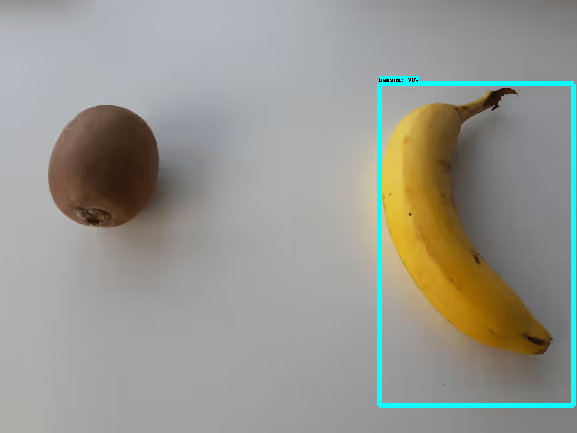

For multiple object detection, our pipeline as of now is not 100 % able to do what we would like. At times, the object detection model is able to tell that both the kiwi and banana are in the picture. Other times, the bounding box is right for one class but the other goes unnoticed.

Next steps

This post primarily aims to proof the concept that our whole pipeline is capable of single object detection via an intermediate auto-labeling step starting from movies (just pictures are also possible) of particular classes only. The amount of classes could be extended to 30 or more. We were able to generate quite trustworthy bounding boxes, with the default LIME’s parameters. For specific problems and data sets, finetuning LIME’s parameters should increase the performance and make it possible to start counting objects in pictures of detect multiple objects in the same picture. Another next step involves replacing MobileNetV1 SSD by its improved successor MobileNetV2 SSD that came out last year to get better results and a higher mAP (mean average precision) score on the ground truth auto-labeled bounding boxes in the object detection model.

Outro

Using LIME’s interpretability properties in image classifiers, we managed to automatically label class-specific data sets and train an object detection pipeline with. This method completely eliminates the cost of human labeling labor, which quickly runs into thousands of dollars.

It should also be noted that even though there are lots of advantages of using this way of working, quality of bounding boxes could possible be underwhelming for certain data sets involving small objects. The quality of the bounding boxes propagates through the object detection models and affects the overall performance. But in use-cases such as the one we showed, significant speed-ups are possible due to the parallel nature of LIME inference, and even more so when using stronger GPU’s than the K-80 we used. If given the option, no one would wait two days before some human labeling farm returns them annotated pictures when LIME could do the same in a couple of hours, is cheaper and does not involve ethical issues, right?

We already stated that labeled data must be the new diamond. Stop swinging those pickaxes. Who would’ve thought that such a trivial type of fruit as a lime would become one of the best tools to mine diamonds?